Few brands have fallen further faster in the past 18 months than America and AI. Last week, I wrote about the reckoning I see coming for America. This week, let’s talk about a reckoning I don’t believe will happen: the AI job apocalypse. Every generation gets its “machines will take your job” panic. This one just comes with better PR and a bigger balance sheet. The AI job apocalypse isn’t data-driven — it’s narrative-driven, engineered by people who profit when you’re scared. Fear is the product. Capital is the outcome.

Wash, Rinse, Repeat

I believe that, similar to every other technological innovation in history, AI will inspire job destruction that will result in an increase in productivity, profits, reinvestment, and (wait for it) jobs. The relevant question isn’t how many jobs we’ll lose or gain; it’s whether the velocity of disruption will overwhelm the period of adaptation and recovery that follows. There are three scenarios: The AI bubble bursts; AI delivers as promised, but on a slower timeline; and AI disruption comes faster than the market can adapt and respond.

Labor Market Narratives

Recently, Anthropic CEO Dario Amodei warned that 50% of entry-level tech, legal, consulting, and finance jobs will be completely wiped out within five years. Last year, he told Axios the “white-collar bloodbath” could spike unemployment to as much as 20%. In 2023, when the AI narrative felt more optimistic, Elon Musk said, “There will come a point where no job is needed … AI will be able to do everything.” In 2021, a year before launching ChatGPT, Sam Altman wrote, “The price of many kinds of labor will fall toward zero once sufficiently powerful AI joins the workforce.” Translation: AI is an extinction-level event for workers … according to those who benefit most from AI being an extinction-level event.

Their story is as old as the Industrial Revolution. In Narrative Economics: How Stories Go Viral and Drive Major Economic Events, Nobel Prize-winning economist Robert Shiller argued that fears about machines replacing human labor contributed to 19th-century economic downturns. Later, science fiction reinforced the narrative, feeding the incorrect belief that automation caused the Great Depression. Fears about the rise of computers exacerbated the double-dip recession of the early 1980s. The danger, according to Shiller, isn’t labor disruption, but the narrative’s negative feedback loop. “The economic hardships created by a temporary recession or depression are mistaken for the job-destroying effects of the machines, which creates pessimistic economic responses as self-fulfilling prophecies.”

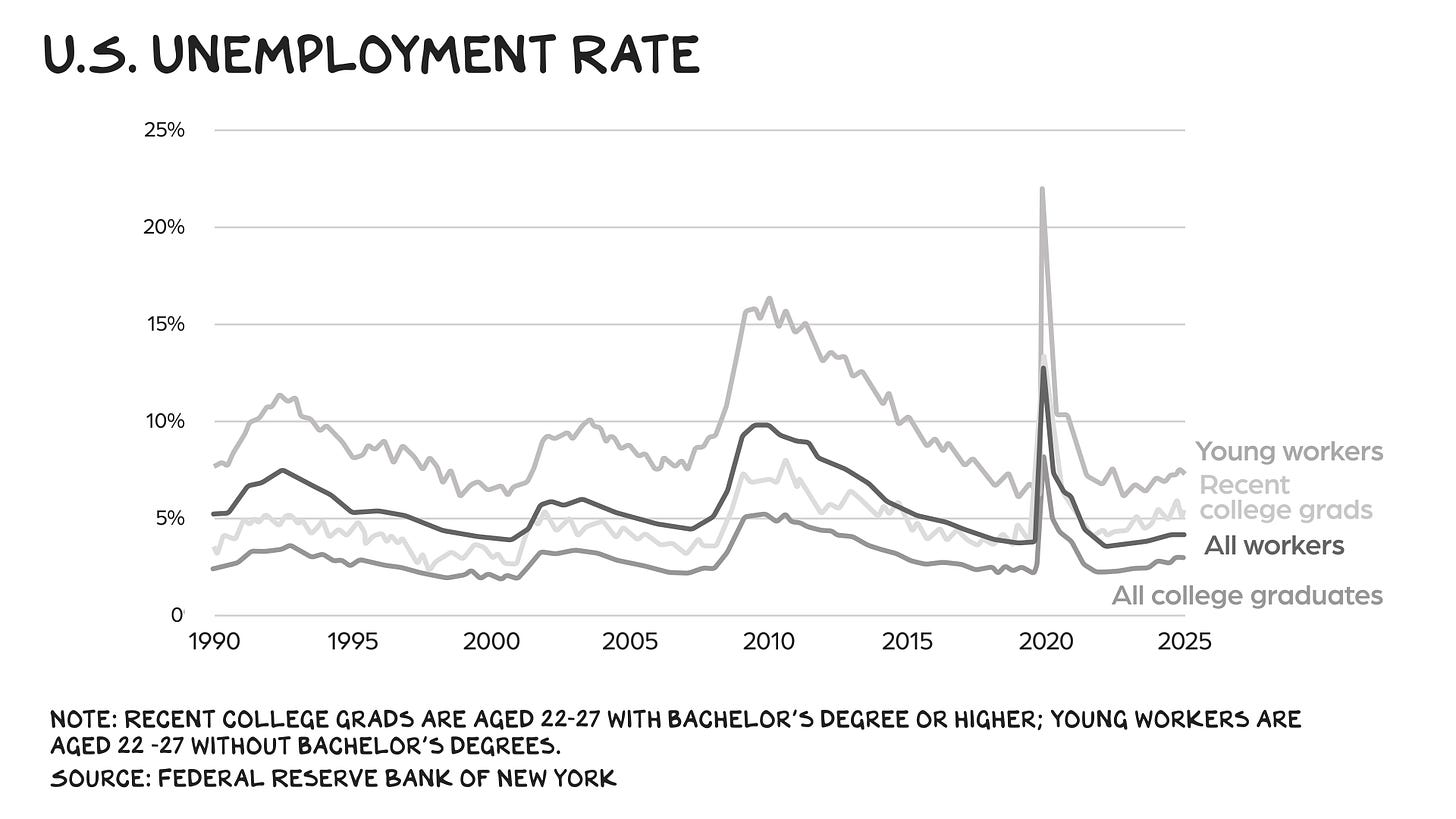

I believe we have the makings for the kind of self-fulfilling prophecy Shiller warned about, as AI-washing masks inflation, tariffs, and over-hiring. Consider tech workers, the supposed canaries in the coal mine. Net technology employment in the U.S. grew from 8.7 million in 2020 to 9.6 million in 2023 and has remained flat since then. Not great, but by no means apocalyptic. Oracle, which laid off 18% of its workforce in March and is projecting negative cash flow until 2030, isn’t capturing AI efficiencies; it’s trading people for chips. Last month’s announcement that Meta would cut 10% of its workforce fed AI anxiety, but in reality Meta is returning to its 2021 headcount. Microsoft’s 7% layoff target would reduce its headcount to 2022 levels, but even after those cuts, Microsoft would still have 47% more workers than it did the year before the pandemic. Since xAI’s 2023 founding, its headcount has grown to an estimated 5,000 people. In March, Musk announced that Tesla would increase headcount, adding, “the output per human at Tesla is going to get nutty high.” The following month, Tesla laid off 10% of its workforce due to poor sales. What we’re seeing isn’t the prelude to a job apocalypse, but a low-hire, low-fire labor market where unemployment rates for tech workers and everyone else are converging around 4%, close to the Fed’s estimate of the long-run unemployment rate.

Progress Is Turmoil

Catastrophizing is a narrative device the hyperscalers deploy to divert capital flows to them and justify their capex. Every new technology in history has gone through a similar arc of creative destruction. I don’t see why AI is any different. As economist Joseph Schumpeter observed in 1942, “economic progress, in a capitalist society, means turmoil.” So far, the turmoil attributed to AI has been more hot air than hard data.

Bubble (Scenario 1)

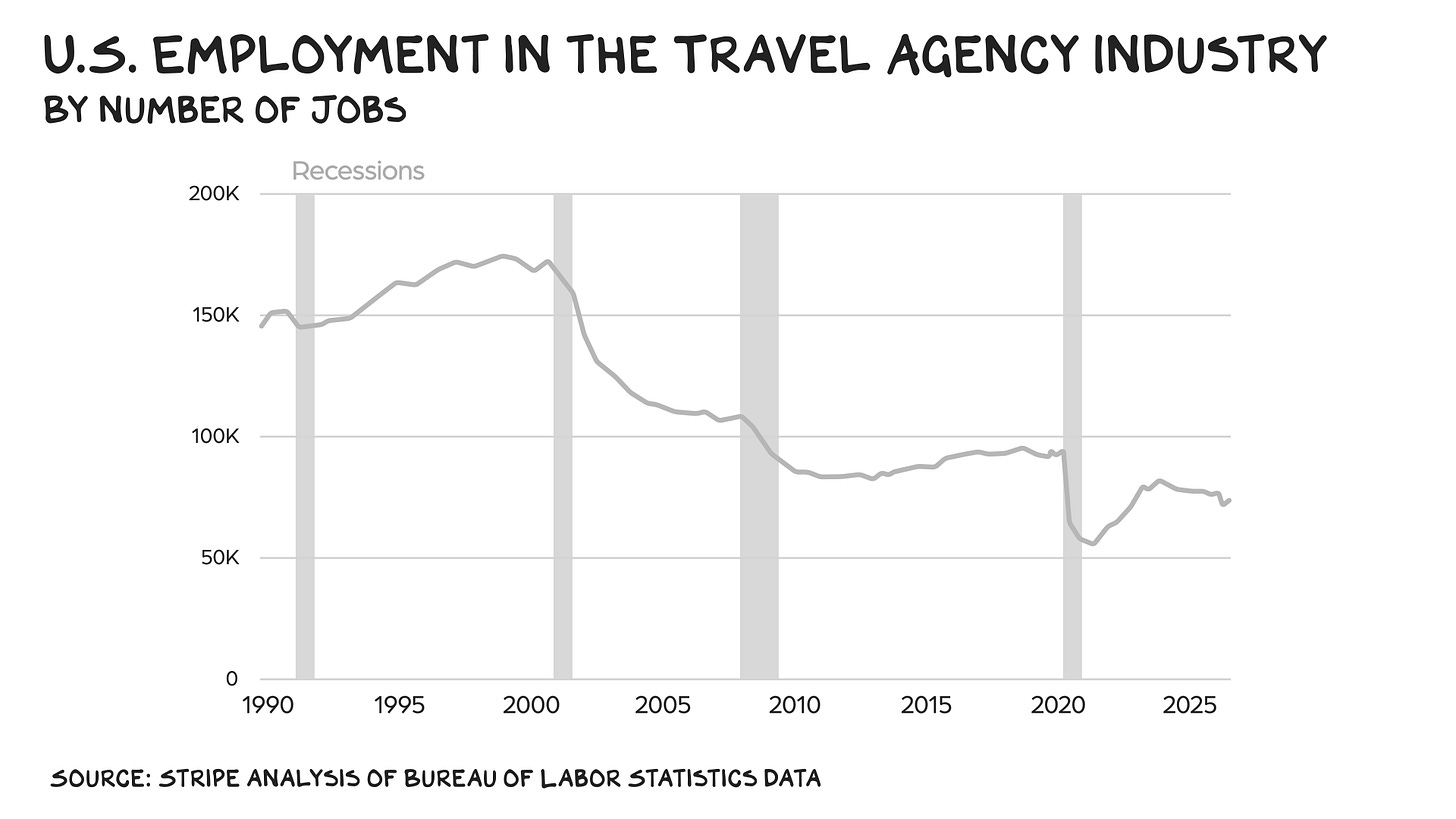

Last fall, I wrote that America is one big bet on AI, as the Mag 10 account for 40% of the S&P’s market cap. Since ChatGPT launched in November 2022, AI-related stocks have accounted for 76% of the S&P 500’s return, 87% of earnings growth, and 90% of capital spending growth. If AI sneezes, the rest of the economy will catch a cold, i.e., plunge into recession. Based on Shiller’s analysis, we’d likely blame AI. Nevertheless, according to Ernie Tedeschi, chief economist at Stripe and former chief economist for the White House Council of Economic Advisers, layoffs come in “recessionary bursts,” rather than at the moment technology renders a profession obsolete. “Widespread displacement of travel agents didn’t happen immediately during the dot-com boom,” Tedeschi wrote. “Rather, it was the bust that drove displacement.” When the economy recovered, however, professions rendered obsolete by technology didn’t return to pre-downturn levels. But those professions didn’t entirely disappear, either. Travel agents still exist, though they’re more sensitive to future downturns relative to the broader labor market, suggesting that as jobs gradually disappear, more workers pivot.

Jevons Paradox (Scenario 2)

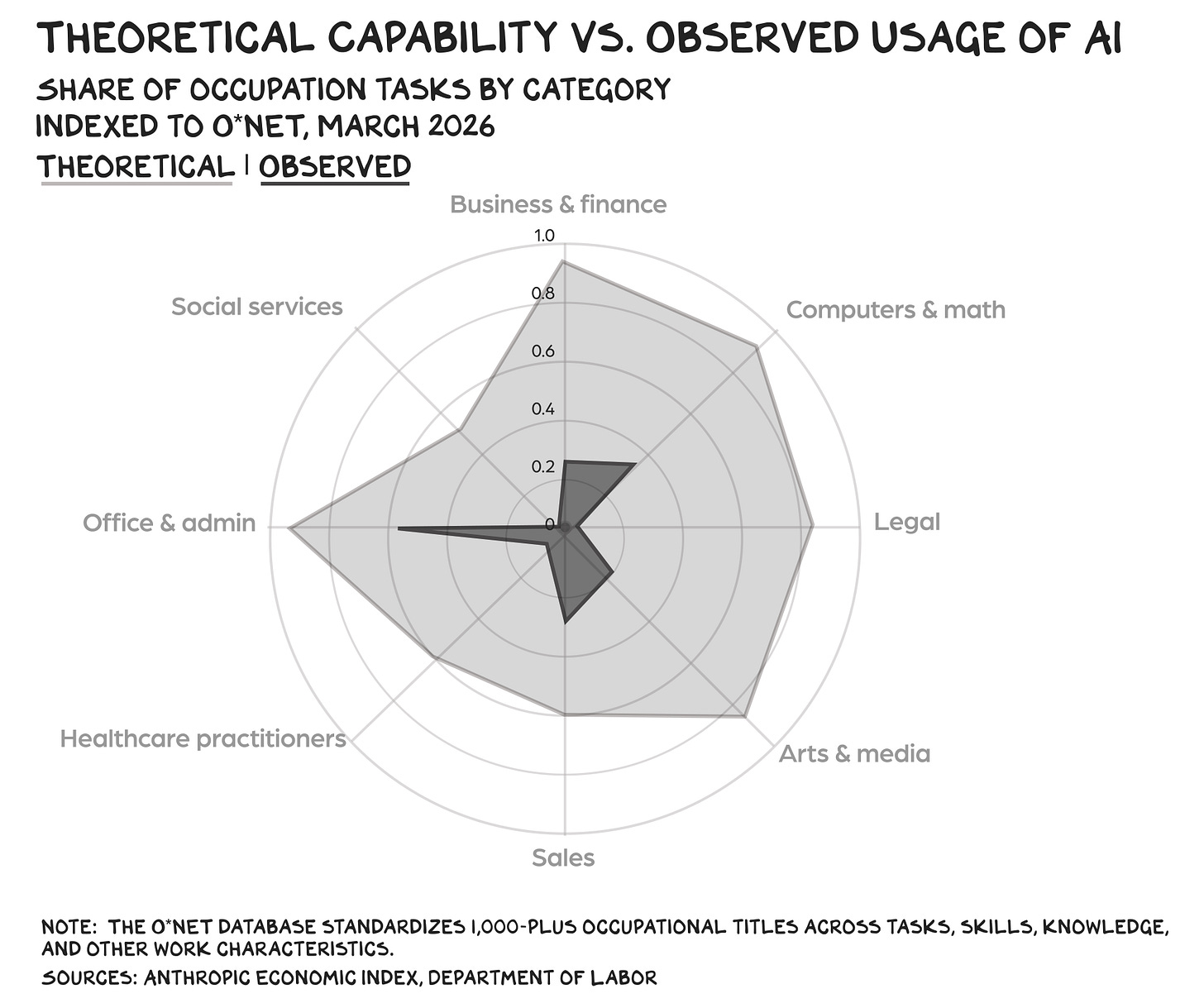

Maybe there isn’t a bubble, or if there is, maybe it doesn’t burst. (Bubbles are visible only in retrospect.) Assuming the velocity of recovery outpaces the disruption, new efficiencies will lead to increased productivity, resulting in rising margins, funding new businesses, employing people in jobs that didn’t previously exist, expanding growth. This is the Jevons paradox. When a resource becomes dramatically cheaper to use, we don’t use less of it; we find a million new uses for it. If that sounds painless, keep reading. In March, Anthropic published the most detailed empirical map yet of AI’s penetration into the labor market, finding that in business and finance occupations, AI could theoretically cover 94% of tasks, tied with occupations in computers and math. Pain is on the horizon, as tasks that can be automated will be automated during the next downturn.

But the tasks professionals perform have never been fixed, according to Eldar Maksymov, an accounting professor at Arizona State University. After the release of the first electronic spreadsheet in 1979, people predicted accountants would face mass unemployment. Instead, after adjusting for population growth, the number of accountants increased 4x over the next 40 years. “In every major occupational group that adopted computers heavily, employment grew faster than in groups that did not,” Maksymov wrote. “Computers eliminated specific tasks within jobs — but the resulting cost reductions created so much new demand that the occupations expanded overall.” Looking at AI, he concludes that the future of every knowledge profession hinges on a single question: Is human demand for analysis, oversight, and assurance elastic?

I believe it is. Case in point: computer programmers. They’re coding less and thinking bigger, according to journalist Clive Thompson, who interviewed more than 70 programmers in Silicon Valley and at small firms across the U.S. As he noted, “a coder is now more like an architect than a construction worker.” One executive Thompson interviewed put it this way: “I have never met a team at Google who says, ‘You know, I’m out of good ideas.’ The answer is always, ‘The list of things I would like to do is nine miles longer than what we can pull off.’” But as the cost of execution drops, new demand will likely come from areas that previously didn’t have access to programmers. “Several developers suggested that the number of software jobs might actually grow,” Thompson wrote. “An untold number of small firms around the country would love to have their own custom-made software, but were never big enough to hire, say, a five-person programmer team necessary to produce it.”

Permanent Underclass (Scenario 3)

The most frightening scenario is one in which AI disruption outpaces recovery velocity, hits every sector simultaneously, and encounters little pushback from policymakers. But this scenario ignores that societal tumult usually stems not from unemployment but from people who are working yet still hungry, which leads to a loss of economic dignity and a search for narratives that assign blame. If it sounds as if we’re already there, trust your instincts.

Inside Silicon Valley, the vibe is bleak. As Jasmine Sun wrote in the New York Times, “Most people I know in the AI industry think the median person is screwed, and they have no idea what to do about it.” Worse, many say that artificial general intelligence — a technology that may never materialize — will create a “permanent underclass.” That belief is fueling a last-chopper-out-of-Saigon mentality where people see a “limited window” to build wealth before AI and robotics fully replace human labor. I believe this is a consensual hallucination. Techno-narcissists have overindexed on the rapid advances in AI capabilities while completely ignoring … everything else.

Fear & Loathing

AI’s popularity is correlated with wealth: Only those earning more than $200,000 per year view AI as a net positive. That’s not a reflection on AI, but yet another signal that the incumbents (the old and the wealthy) have successfully hoarded opportunity. In other words, the AI jobs freak-out is the latest act in America’s ongoing wealth inequality drama. The Gini coefficient is how economists measure inequality: Zero indicates everyone has exactly the same wealth; a score of 1.0 means one individual owns everything. In the U.S., we’re higher than 0.8, about the level seen when the French began separating people from their heads. The real disruption won’t come from AI, but from the public watching arsonists sell smoke detectors and call it innovation.
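For readers who want to see how that score behaves, here is a minimal sketch of one common way to compute a Gini coefficient from a list of individual wealth figures. The function name `gini` and the toy numbers are illustrative assumptions, not data from any survey, and the sketch assumes nonnegative wealth values.

```python
def gini(wealth):
    """Gini coefficient of nonnegative wealth values (illustrative sketch).

    Uses the rank-weighted formula on sorted values:
        G = 2 * sum(rank_i * x_i) / (n * sum(x)) - (n + 1) / n
    """
    values = sorted(wealth)
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        raise ValueError("need at least one holder and nonzero total wealth")
    # Each value is weighted by its 1-based rank in the sorted list.
    ranked = sum(rank * v for rank, v in enumerate(values, start=1))
    return (2 * ranked) / (n * total) - (n + 1) / n


# Toy populations (hypothetical numbers, for intuition only).
equal_society = [1, 1, 1, 1]       # everyone holds the same wealth
one_owner = [0] * 999 + [1]        # one person holds everything

print(gini(equal_society))
print(gini(one_owner))
```

With a finite population of n people, the "one person owns everything" case works out to (n - 1) / n, so the score approaches 1.0 as the population grows rather than hitting it exactly.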

The AI job apocalypse isn’t an economic forecast — it’s a marketing strategy. We’re not witnessing the end of work. We’re watching the monetization of fear.

Life is so rich,

P.S. A few tickets remain for the Markets podcast tour. We’ll be recording live, with special guests, in San Francisco (sold out, sorry), Los Angeles, Miami, Chicago, and New York, where Anthony Scaramucci will join Ed and me on stage.

Scott, you’re a genius, and what I admire most is your seeing the reality behind the BS of wealth inequality, which is a moral thing as much as a comic thing: a societal decision made by individuals. Thanks for this post.

Scott, your argument seems to underweight the trajectory risk. It reads as if today’s AI systems, with their current jaggedness and failure modes, are roughly the systems we’ll be dealing with going forward. But people have been saying LLMs are about to hit a wall since the GPT-3 era, and the wall keeps moving.

Capabilities are not just improving; several measures suggest the pace of improvement has accelerated, especially with reasoning models. A year ago, many of my friends in tech thought AI was mostly hype. Then they were forced to use it in real workflows, and now many of them are openly worried about getting replaced in the next year or two.

The latest frontier models are also getting powerful enough that even the current administration, despite its deregulatory instincts, is moving toward pre-deployment government evaluations and reportedly considering formal review of new models before release. The U.S. and China are also reportedly exploring AI guardrail talks to prevent the rivalry from spiraling into crisis.

So I agree that “AI apocalypse” can be used as marketing. But dismissing the risk as mostly narrative seems like wishful thinking, and like a refusal to come to terms with the reality of the situation. It seems unwise to blindly assume today’s gaps are durable. No one has a crystal ball, but it is far from guaranteed that AI systems will remain this jagged while capability, reliability, scaffolding, and adoption keep advancing this quickly.

I wrote more about why I think the usual “technology always creates new jobs” argument breaks down here: https://alont.substack.com/p/what-happens-when-we-automate-our