OpenAI just experienced its first “week from hell”. First, the Wall Street Journal reported the company missed its targets for revenue and user growth in 2025. Then Sam Altman testified in the Elon Musk v. OpenAI trial. Then lawyers in California filed multiple lawsuits on behalf of victims’ families, alleging OpenAI’s negligence contributed to a school shooting in Canada that killed eight people, including six children. Not ideal.

None of these events is existential, but each touches on a big question that has long plagued the company: 1) Does the business actually work? 2) Is Sam Altman a liar? 3) Is ChatGPT dangerous to society?

It’s especially bad timing considering Sam Altman and OpenAI are reportedly eyeing an IPO in the second half of this year at a valuation of up to $1 trillion. Usually when companies IPO you get questions like: Who’s underwriting? What’s the float? What are the lock-ups? Less common is for investors to be asking whether the CEO is a sociopath.

Which raises another crucial question: What will happen once this company actually is public? How will Wall Street react when they actually do know the ins and outs of this business? I put this question to Reid Hoffman, one of OpenAI’s earliest investors. He told me it’s not his job as a venture investor to obsess over the financials.

At that moment I realized OpenAI is in for it. For years, the only investors Sam Altman has had to please are venture capitalists who care more about technology than economics. As soon as the company goes public, however, it will learn that what works in Silicon Valley doesn’t necessarily work on Wall Street.

Missed Expectations

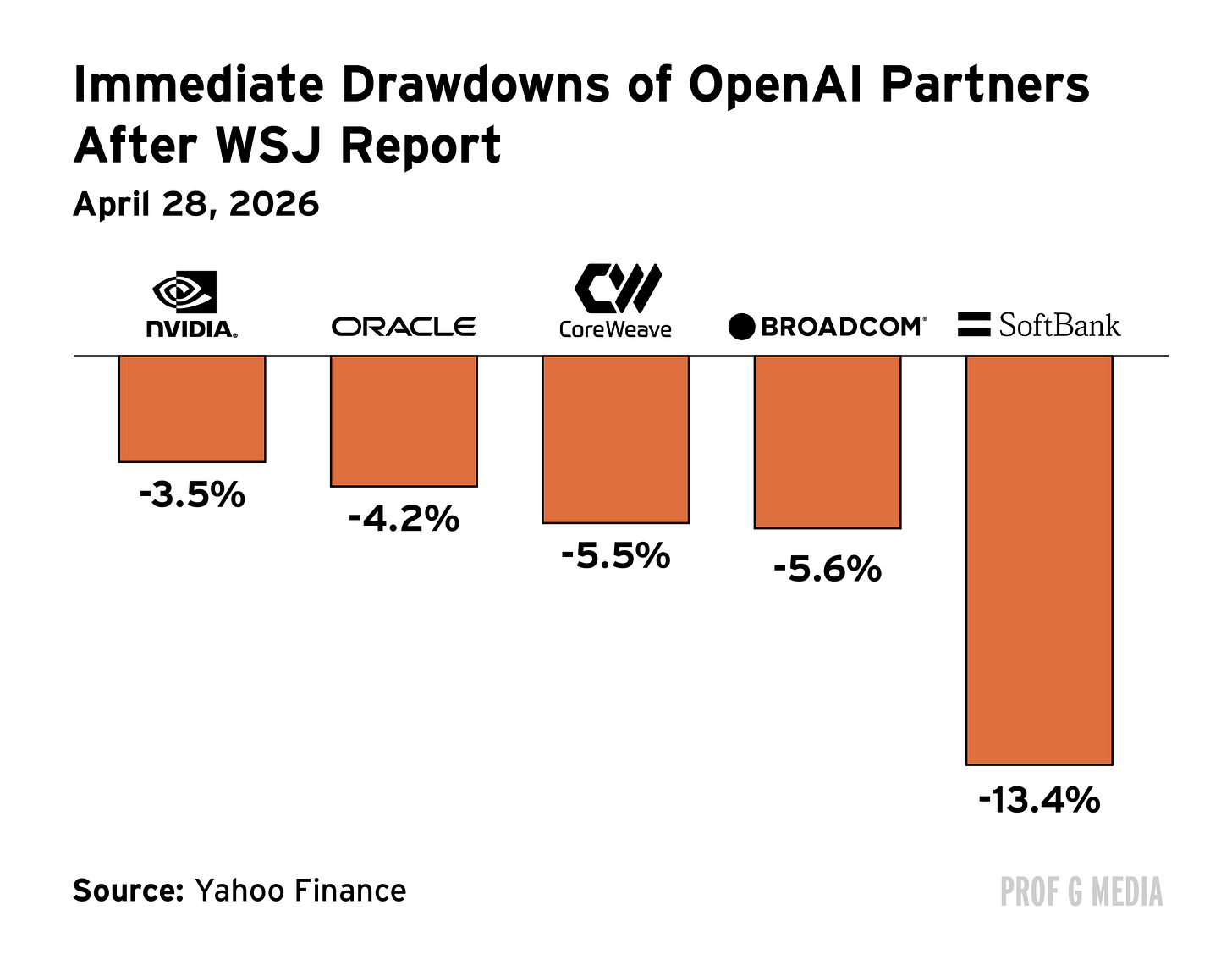

The big red flag for OpenAI last week wasn’t the Wall Street Journal’s report on the company, but the market’s reaction to it. Many of the largest companies in the world immediately took a beating. Nvidia fell more than 3%. CoreWeave fell 5%. Broadcom, nearly 6%. When you add up all the losses, the report resulted in a one-day erasure of roughly $350 billion in market value.

That number should make you think the report revealed something terrible. But it really didn’t. All we learned is that OpenAI missed its revenue and user targets for 2025. Do we know how much they missed by? No. Do we know what their revenue target actually was? No. All we know is that they missed. And that was enough to knee-cap the Nasdaq.

Committed

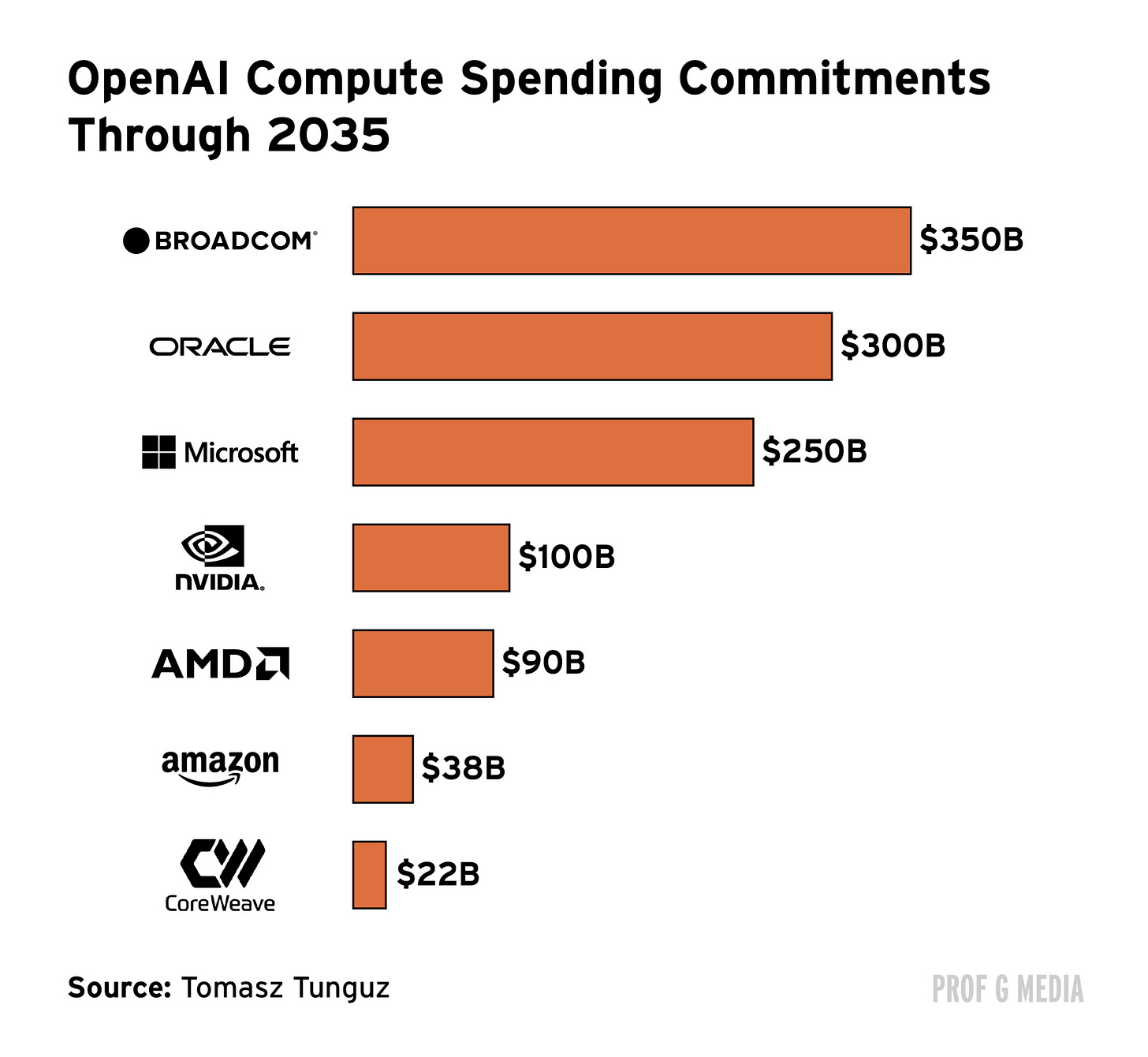

Before we get into what we don’t know about OpenAI, let’s establish what we do know. 1) We (sort of) know the company generated roughly $13 billion in revenue last year. 2) We know they generated $2 billion in revenue in a month this year. 3) We know they plan to spend $600 billion over the next five years. And 4) we know that over the next decade, that number will be roughly a trillion.

These numbers are vague and largely unhelpful, but let’s work with them. OpenAI’s spending will be allocated across seven main vendors: Broadcom, Oracle, Microsoft, Nvidia, AMD, Amazon, and CoreWeave. The Oracle deal requires OpenAI to hand over $60 billion a year starting in 2027. That’s more than Oracle’s entire 2025 revenue, and almost five times OpenAI’s. The AMD deal works in a similar way: AMD gave OpenAI a discount on its stock in exchange for a $90 billion GPU commitment, a bet that only pays off if OpenAI actually has $90 billion to spend. The Nvidia arrangement is the most circular: Nvidia invested $100 billion in OpenAI, paid largely in chips, meaning Nvidia’s balance sheet is now hostage to the same growth story. The bottom line: The trajectories of all of these companies are now dependent on OpenAI achieving its goals.

But those goals are increasingly unrealistic. It’s estimated the company will need to grow revenue from $13 billion to more than $500 billion by 2029 just to cover its compute costs. That’s more revenue than any American company generated in 2025 aside from Amazon and Walmart. OpenAI has to do that without a retail business, and they have to do it in three years.
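To put that target in perspective, here is a back-of-the-envelope sketch of the annual growth rate it implies. It uses only the rough figures cited above ($13 billion today, $500 billion needed, three years to get there) and assumes smooth compounding, which is a simplification of mine and not anything OpenAI has published. The function and variable names are purely illustrative.

```python
def implied_annual_growth(start, end, years):
    """Compound annual growth rate needed to go from `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


# Rough figures taken from the text above, in billions of dollars.
START_REVENUE_BN = 13    # approximate revenue last year
TARGET_REVENUE_BN = 500  # estimated revenue needed by 2029 to cover compute
YEARS = 3                # assumption: the "three years" mentioned above

rate = implied_annual_growth(START_REVENUE_BN, TARGET_REVENUE_BN, YEARS)
print(f"Required growth: {rate:.0%} per year")
```

Under those assumptions the answer comes out to roughly 238% a year. In other words, revenue would have to more than triple every single year for three years running.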

What We Don’t Know

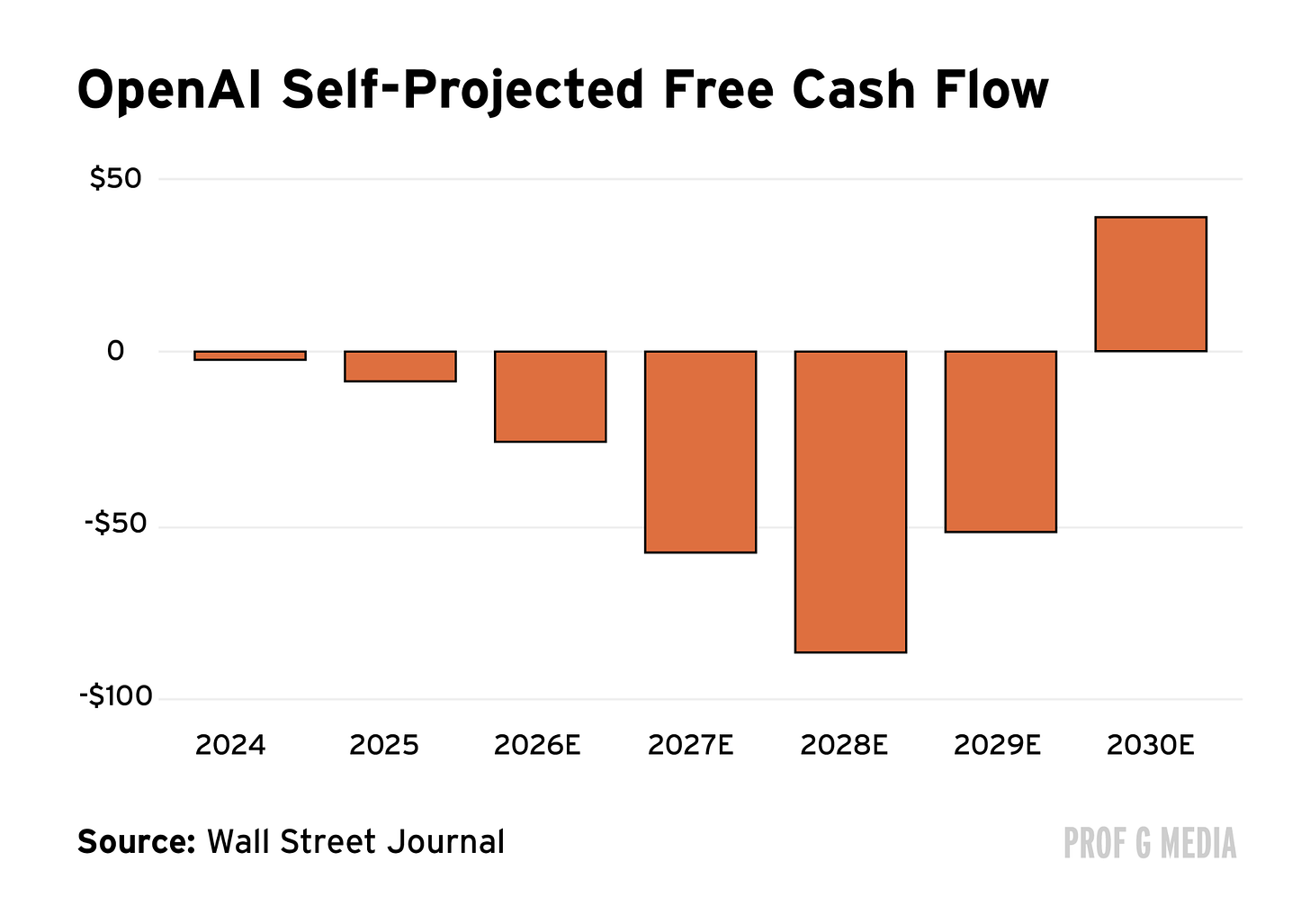

OK, now let’s talk precisely about what we don’t know. We don’t know OpenAI’s actual cost structure. We also don’t know its gross margins, its operating losses, or its burn rate with any precision. We don’t know how much it costs to run ChatGPT per user, or whether that number is improving. We don’t know whether its $25 billion in ARR is growing fast enough to justify an $850 billion valuation, because we’ve never seen an audited financial statement. While we do know their internal projections of profitability, we don’t know how realistic those projections are nor can we trust them, as the spending plans are constantly changing. We also don’t know if we can trust any of the other numbers, because according to an OpenAI board member, CEO Sam Altman is “unconstrained by truth.” In sum: We really don’t know anything about OpenAI.

If this were an ordinary startup that might not be a big deal. But this isn’t an ordinary startup; it’s a trillion-dollar startup, one on which the momentum of the entire global stock market largely depends. That was proven last week when one shred of arguably meaningless information erased $350 billion in a matter of hours. What will happen when we unearth something bigger?

The Reckoning

The nice thing about staying private is you don’t have to tell anyone anything. This is one of the reasons so few companies go public nowadays. But there’s a ceiling to how many $40 billion private rounds you can raise. Eventually, OpenAI will have to go public. And when they do, Sam Altman will be legally required to do what he’s supposedly avoided for many years: Tell us the truth. At that point, the markets (which have thus far priced off of podcast appearances and press releases) will have to contend with what the numbers actually say. That reconciliation is going to be ugly.

One of the most damning details is that the company’s CFO, Sarah Friar, desperately does not want it to go public this year. It’s gotten to the point where she is now reportedly being actively excluded from investor meetings. Look at her background and you can understand why: She’s a McKinsey and Goldman alum who later wound up as the CFO of Block. In other words, she’s a Wall Street veteran … who knows what other Wall Street veterans will think.

Meanwhile, the Silicon Valley golden boy continues to post across the timeline about the future of artificial general intelligence. His latest area of interest: Algorithmic goblins. Has he told us anything of substance as it relates to the actual business? No. Does that matter? No, nothing matters compared to AGI.

It’s possible that OpenAI’s extraordinary burn will pay off. But the other version of the story — the one that last week’s news seemed to indicate — is that the entire thing is being held together by a collective agreement not to ask difficult questions. So far our questions have been avoidable, but eventually they won’t be. At that point we’ll learn which version of the story is true.

See you next week,

Ed

I have a feeling OpenAI won't IPO this year but maybe in 2027 (which they should've done anyway). I think Sarah Friar is right, but unfortunately for OpenAI it's a problem when you combine Sarah's comments with everything else: a compressed IPO timeline, Sarah being excluded from important discussions (it's insane that the CEO and CFO aren't in the same room), Sarah not reporting to Sam, and other things. There's no business discipline, and there's a non-zero chance they'll get found out soon.

I can't wait to read their S-1!

OpenAI--and the wider "generative AI"/LLM business--was always a case of "you can fool (nearly) all of the people, some of the time."

Just think about the last few years of mania: with its product-in-search-of-an-ACTUALLY-profitable-use-case, the massive burning of resources by a few tech bros with their fingers crossed to become trillionaires, and a credulous public (including C-suite members...and dang, I've MET 'em in person) ready to believe anything.

But you can't fool everyone. And you certainly can't fool everyone, long term.

Many of us who actually know the systems from a technical standpoint (rather than the woo-woo/"CEO-said-a-thing" journalism) have always been deeply skeptical. Others, who are keeping an eye on actual real-world externalities (vs fantasies of apocalypse or paradise, lol), see the criminality and enshittification these systems have now enabled at scale, running rampant...alongside virtually no measurable benefit.

Tl;dr: a chatbot was never going to be your savior. Systems designed to mimic the cosmetic appearance of "thought" IN THE FORM OF LANGUAGE, even at amazing speed, were never going to produce "Gen AI."

I just hope the reckoning comes sooner rather than later. (Unstable systems jammed wholesale and untested into the entire modern digital infrastructure--just to satisfy the private curiosity (and greed) of a tiny elite--was never gonna end well...so the sooner the market cools on it, the better.)