Why Silicon Valley is hooked on Chinese AI tokens

Plus, the new law that has every foreign company in China quietly panicking

TL;DR

Why American AI Runs on Chinese Tokens

Beijing’s new export control law leaves foreign companies guessing what’s legal

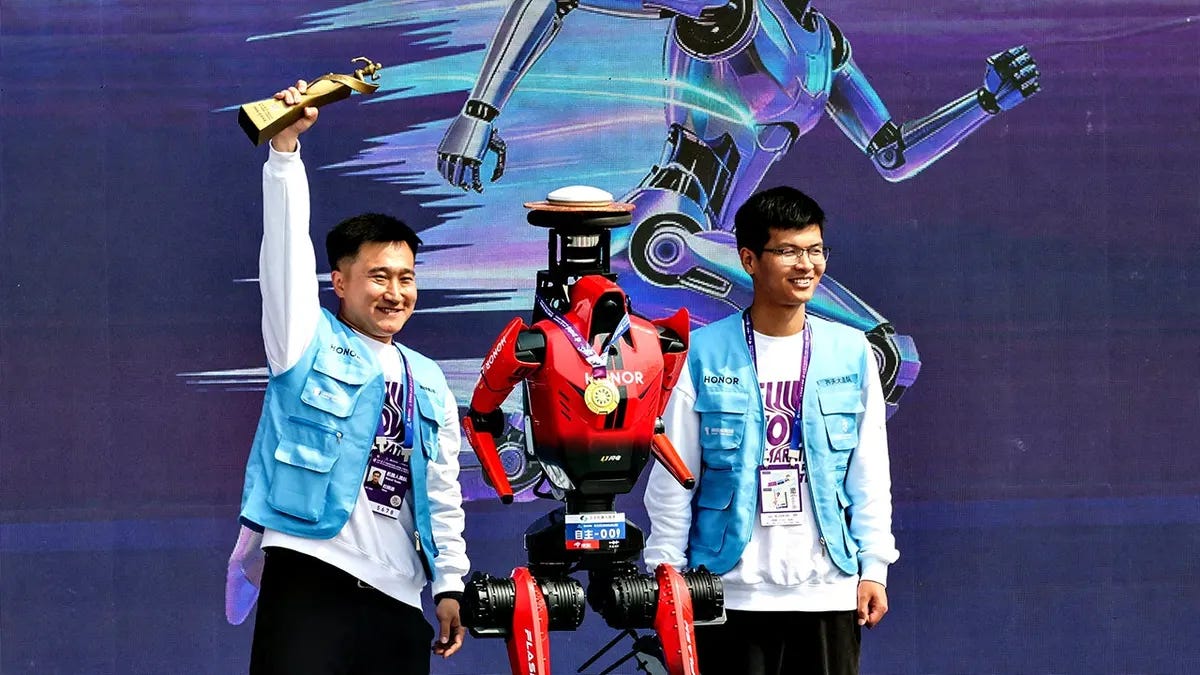

A humanoid robot just broke the half-marathon world record in Beijing

China Is Winning the AI Token Race

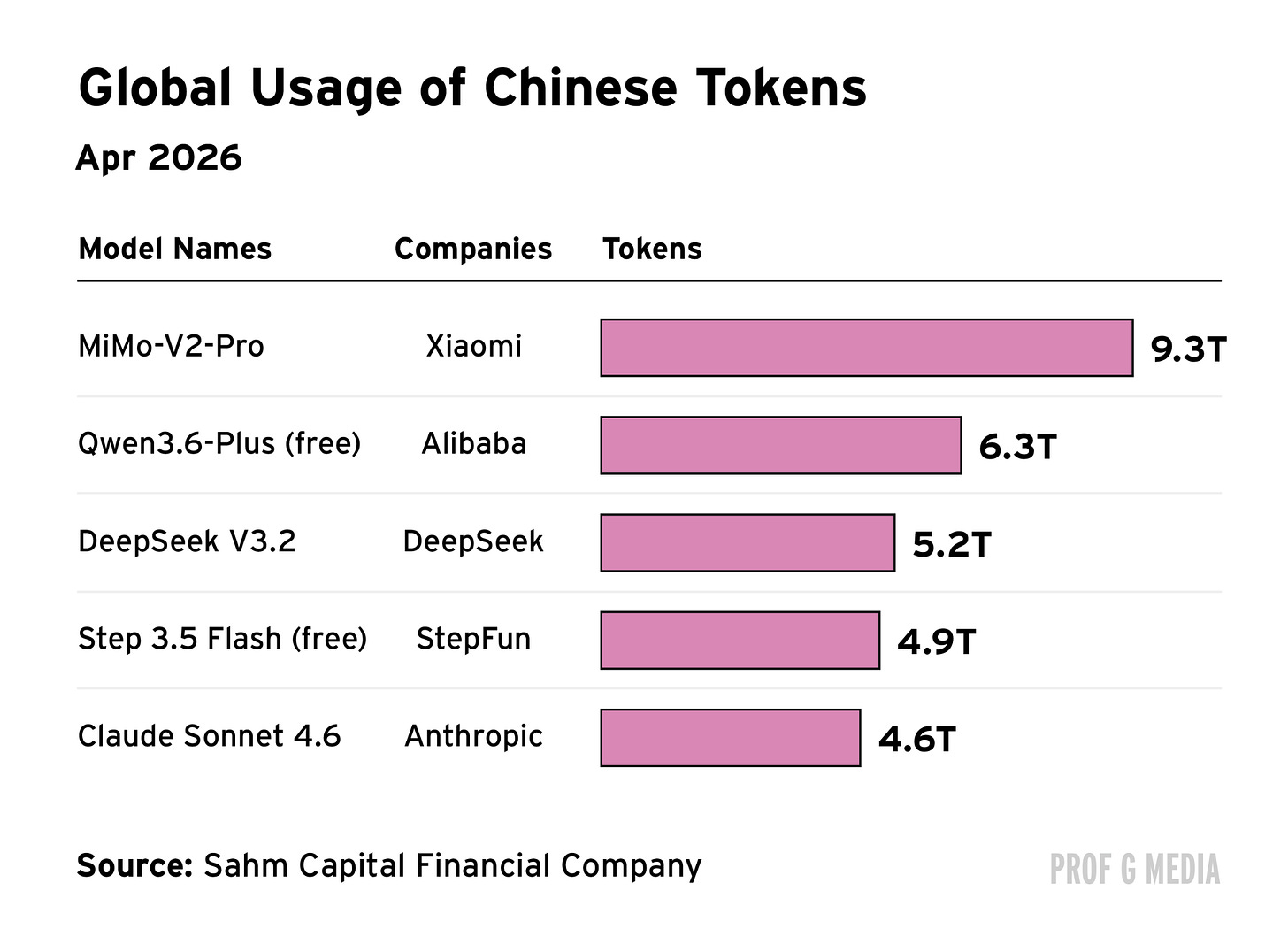

In a single week in February, Chinese AI models delivered 4.12 trillion tokens, while U.S. models delivered 2.94 trillion. That gap reflects a structural cost advantage that China has quietly built, and that Silicon Valley is quietly exploiting.
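For a sense of scale, the two weekly figures above can be turned into a ratio and a share. This is a minimal sketch using only the numbers quoted in the article. The share is China's portion of the combined China plus U.S. total, not of global volume, and the function name is illustrative.

```python
# Weekly token volumes quoted in the article, in trillions of tokens.
CHINA_TOKENS_T = 4.12
US_TOKENS_T = 2.94


def volume_gap(china: float, us: float) -> dict:
    """Compare two token volumes given in the same unit."""
    total = china + us  # combined China + U.S. volume only, not global
    return {
        "ratio": china / us,            # how many times larger China's volume is
        "china_share": china / total,   # China's share of the two-country total
        "gap_trillions": china - us,    # absolute difference
    }


gap = volume_gap(CHINA_TOKENS_T, US_TOKENS_T)
print(f"China/U.S. ratio: {gap['ratio']:.2f}x")
print(f"China share of combined volume: {gap['china_share']:.1%}")
print(f"Absolute gap: {gap['gap_trillions']:.2f} trillion tokens")
```

On these figures, Chinese models delivered about 1.4 times the U.S. volume that week, or a little under 60% of the two-country total.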

WTF Is … an AI Token

Tokens are the fundamental unit of AI output. Every question answered, every task an AI agent completes, burns through them.

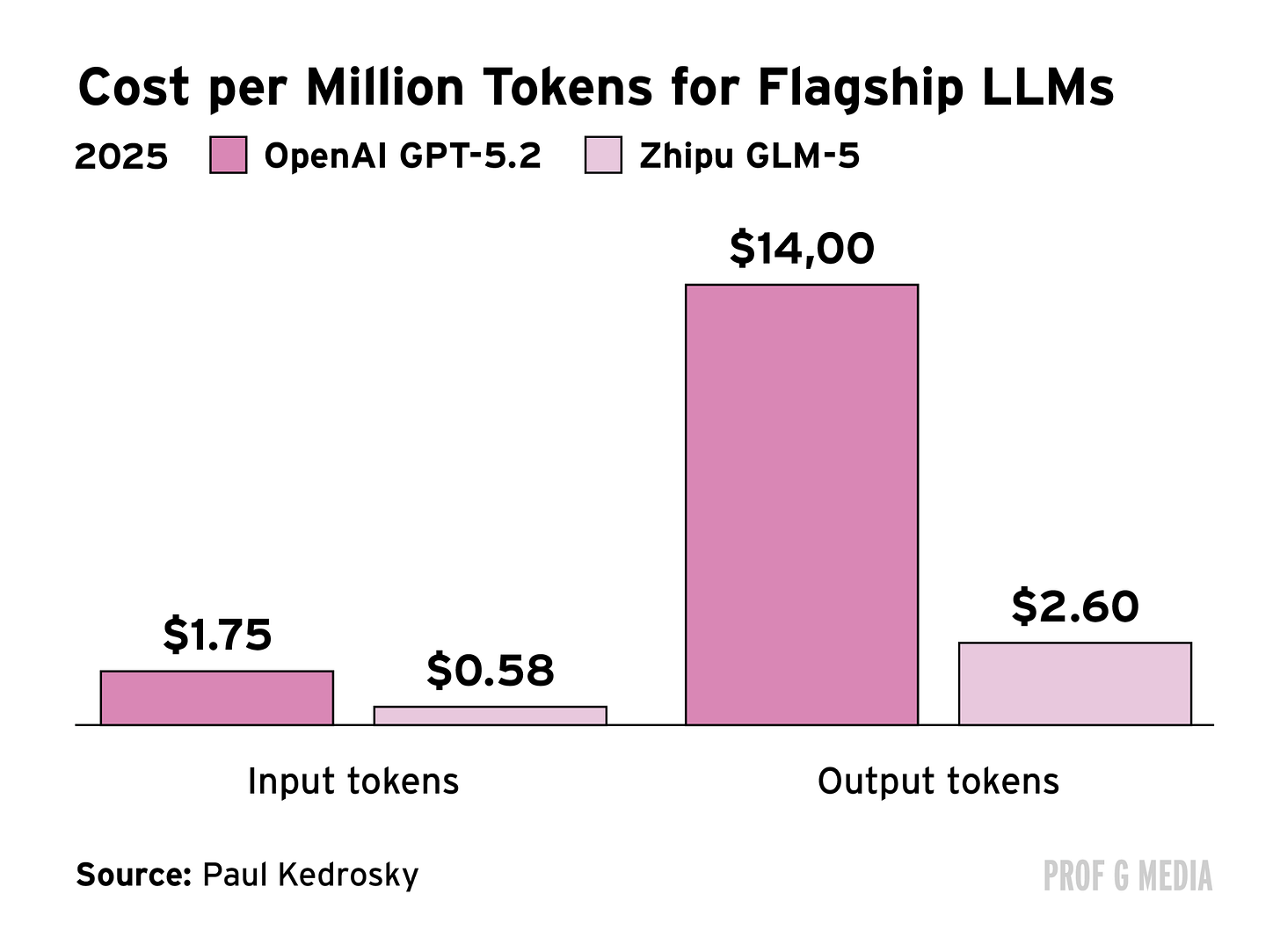

Chinese models like MiniMax and Moonshot charge around $2 to $3 per million output tokens. Anthropic’s Claude Sonnet runs about $15. That roughly 6x differential is why companies, including Airbnb, have turned to Chinese large language models (LLMs) to power their products. The AI is cheaper and, for many engineering teams, easier to fine-tune.
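The quoted prices imply a range of multiples rather than a single number. The sketch below works through that arithmetic and applies it to a hypothetical workload. The 500 million output tokens per month is an assumption chosen for illustration, not a figure from the article, and the prices are the approximate ones quoted above.

```python
# Approximate list prices quoted in the article, USD per million output tokens.
CLAUDE_SONNET_PRICE = 15.0
CHINESE_PRICE_LOW = 2.0
CHINESE_PRICE_HIGH = 3.0

# Hypothetical workload, assumed purely for illustration.
MONTHLY_OUTPUT_TOKENS = 500_000_000


def price_multiple(expensive: float, cheap: float) -> float:
    """How many times more the expensive model costs per token."""
    return expensive / cheap


def monthly_cost(tokens: int, price_per_million: float) -> float:
    """Output-token cost in USD for a given volume and per-million price."""
    return tokens / 1_000_000 * price_per_million


multiple_max = price_multiple(CLAUDE_SONNET_PRICE, CHINESE_PRICE_LOW)
multiple_min = price_multiple(CLAUDE_SONNET_PRICE, CHINESE_PRICE_HIGH)

cost_sonnet = monthly_cost(MONTHLY_OUTPUT_TOKENS, CLAUDE_SONNET_PRICE)
cost_cn_low = monthly_cost(MONTHLY_OUTPUT_TOKENS, CHINESE_PRICE_LOW)
cost_cn_high = monthly_cost(MONTHLY_OUTPUT_TOKENS, CHINESE_PRICE_HIGH)

print(f"Price multiple: {multiple_min:.1f}x to {multiple_max:.1f}x")
print(f"Monthly cost at $15: ${cost_sonnet:,.0f}")
print(f"Monthly cost at $2 to $3: ${cost_cn_low:,.0f} to ${cost_cn_high:,.0f}")
```

The multiple lands between 5x and 7.5x, so "about 6x" is a fair midpoint. The calculation covers output tokens only and ignores input-token pricing, caching discounts, and quality differences between models.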

Two factors explain China’s cost advantage. First, electricity is significantly cheaper in China than in the U.S. Second, Chinese AI architecture uses a mixture-of-experts system that generates tokens with far less compute than comparable American models. That second point is, in part, a consequence of Washington’s own chip export restrictions, which forced Chinese labs to engineer their way around compute constraints.

As the AI landscape shifts, this price difference matters more. Agentic AI systems, which perform multi-step tasks rather than answer single questions, consume far more tokens than chatbots. As agentic AI goes mainstream, the premium on cheap token generation grows. China has a structural advantage in the commodity that powers the next generation of AI products, and that advantage is deepening.

Alice’s Take: We are in a gold rush, but it has a ceiling. My read is that Washington eventually follows the EV playbook: tolerate Chinese AI until it becomes politically untenable, then move hard. The Biden administration did it with EVs. The Trump administration will do it with AI, and when it does, the scope will extend beyond LLMs to the agentic layer too. I would assign that scenario a high probability within two years.

What the EV story also shows, though, is that losing the U.S. market does not end China’s AI ambitions. Chinese EVs saw 140% year-over-year growth globally last month despite being locked out of America. Chinese AI companies will find the same markets. DeepSeek just announced external fundraising at a $10 billion valuation. The next story to watch will be whether foreign capital flows into Chinese AI at scale.

James’ Take: About $1.6 trillion has been invested in AI globally, with $250 billion last year alone. Any country that can produce the underlying commodity more cheaply than anyone else has a genuine structural advantage, and China has built exactly that.

The concern in Washington is legitimate because a Chinese LLM or agentic AI system operating in Silicon Valley is essentially impossible to regulate. The algorithm is in China. The staff are in China. The head office is in China. It is a genuine export of Chinese technology embedded in the most strategically critical sector of the U.S. economy. But unlike a factory or a corporate entity, software on the internet cannot be sanctioned. Any developer can find and use a Chinese AI model with a basic search. I do not know how the U.S. stops that even if it decides it wants to.

Beijing’s New Export Control Law Has Foreign Companies Worried

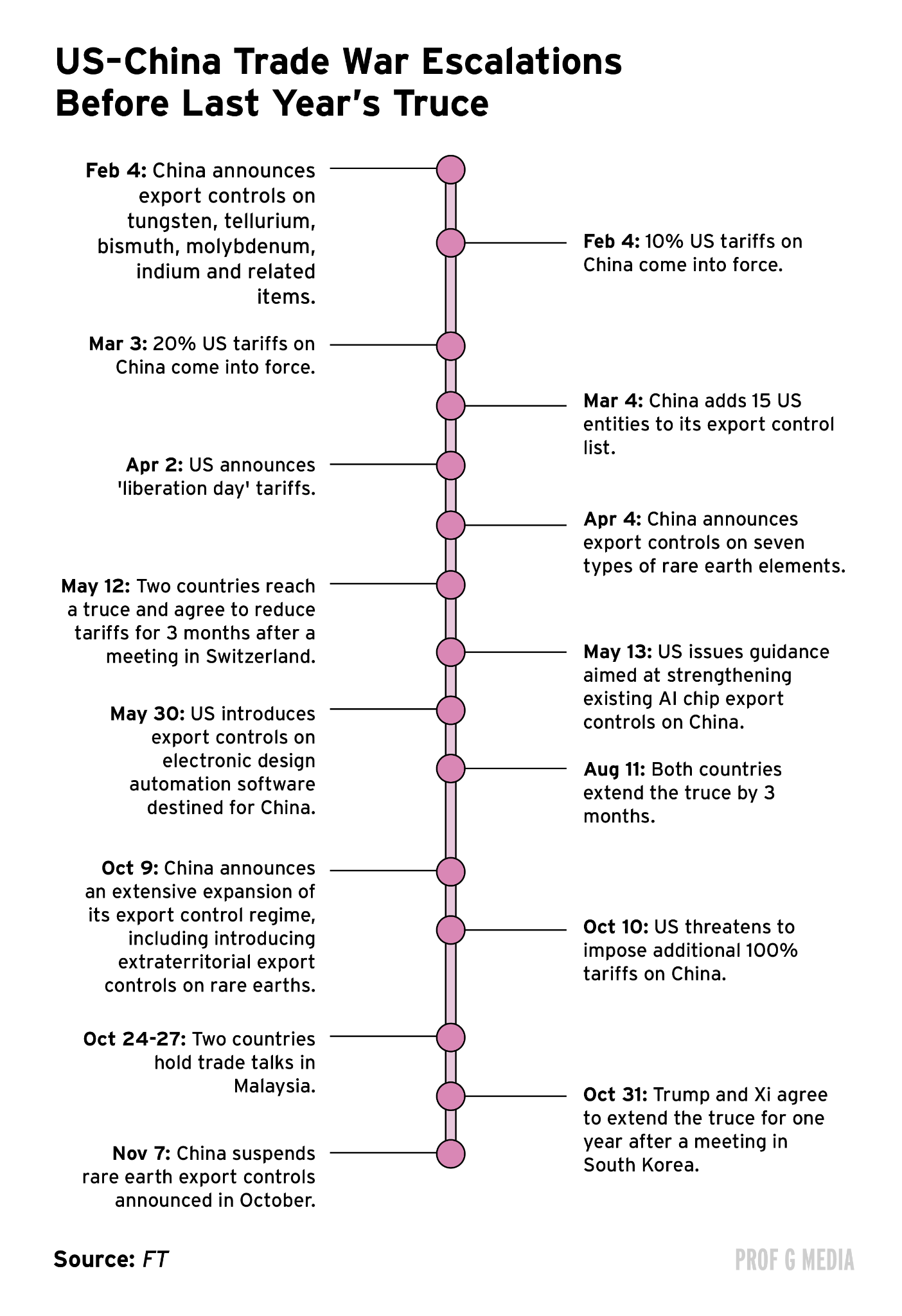

Last week, China published the State Council Regulations on Industrial and Supply Chain Security. The legislation is so vague that foreign legal teams cannot determine what it actually prohibits.

One article makes it illegal to “harm the security of the country’s industrial and supply chains.” Another bars companies from carrying out “information gathering activities related to industrial and supply chains in China.” A foreign company auditing its own supply chain, a routine practice globally, could theoretically be in violation.

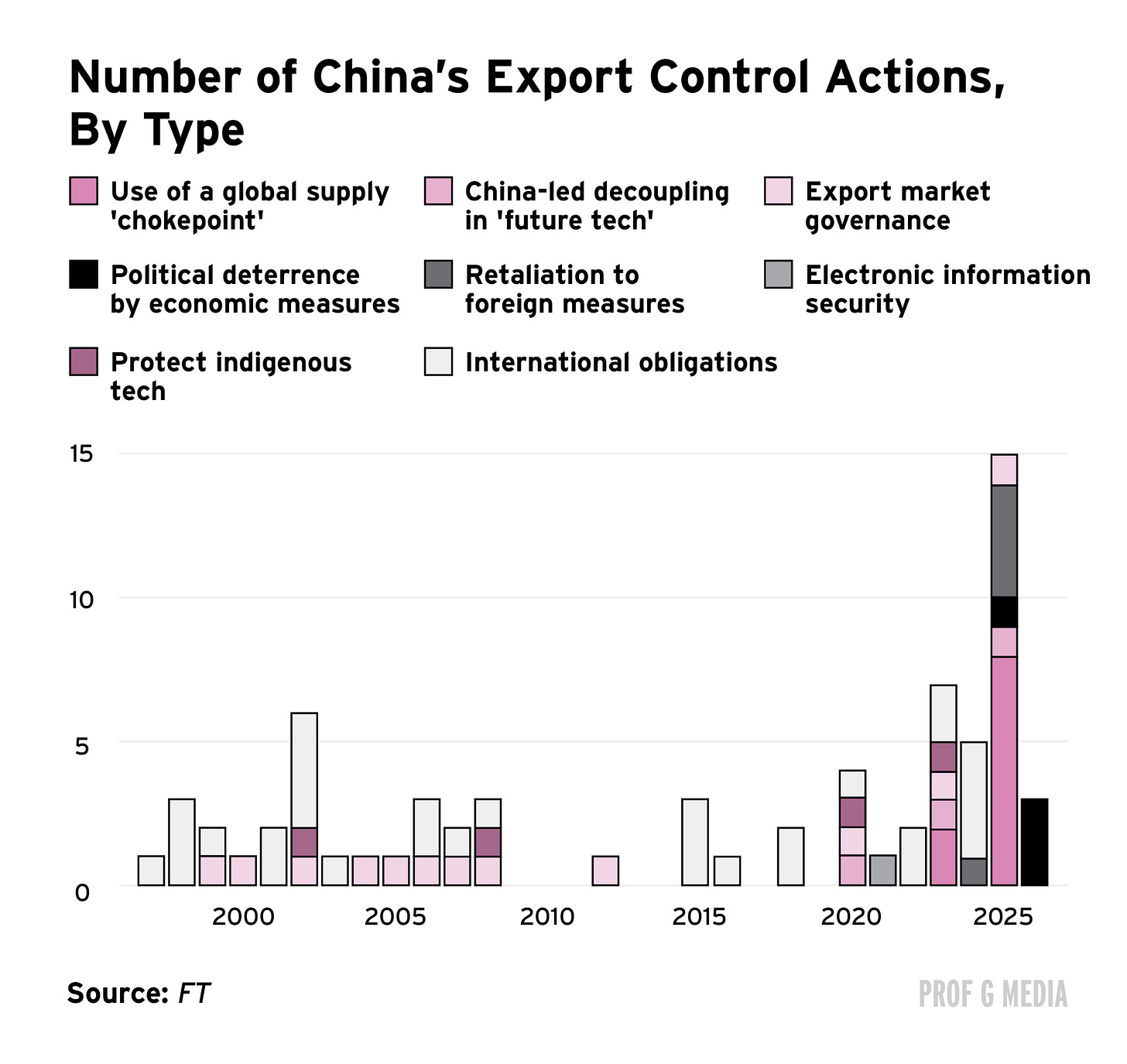

That vagueness is the context for a broader pattern. China has nearly tripled its use of export controls over the past five years.

Until now, most of those measures looked like tit-for-tat reciprocation. The U.S. restricted chips. China restricted rare earths. Going forward, the new legislation suggests something more deliberate: Beijing is building legal architecture around its supply chain leverage before the next round of U.S. restrictions arrives.

China produces roughly 60% of the world’s generic drugs, around 70% of legacy semiconductors, and 80% to 90% of rare earths. It accounts for approximately 80% of global solar panel components. With the world in month two of an oil shock triggered by the Strait of Hormuz blockade, a move to restrict solar exports would land harder now than at any point in recent history.

Alice’s Take: China is no longer just copying the U.S. playbook. What started as a defensive posture has become an offensive one. The rare earth controls last year went further than anything Washington has done: China said that any product with even 0.1% of its content sourced from Chinese heavy rare earths falls under its export licensing regime. That is not reciprocity. That is an attempt to lock in supply chain dominance across entire industries.

I sense China is now actively mapping which choke points it controls and building legal architecture around them. Whether that extends next to petrochemicals, commodities, or other sectors is the key question. However, it’s clear that this is a supply chain strategy, not a trade negotiation tactic.

James’ Take: The vagueness in the new legislation is the point. When nobody can tell you exactly what is illegal, the chilling effect on corporate behavior is enormous. Some of the largest multinationals in the world are looking at these rules and struggling to interpret them.

My read is that this is partly about building leverage ahead of a Trump-Xi summit expected in early May. China tends to do this. But the implications extend well beyond any single meeting. Beijing is showing Washington that the cost of confrontation is very high. It controls the choke points in sector after sector, and it now has legal tools vague enough to deploy against almost any target.

China May Be in the Golden Age of Innovation

Last month, a humanoid robot named Lightning won a half-marathon in Beijing. Lightning ran autonomously, without a remote control, navigating its own path. It covered the roughly 21 km course in 50 minutes and 26 seconds, finishing ahead of every human competitor in the mixed race and almost seven minutes inside the human world record of 57 minutes 20 seconds, held by Jacob Kiplimo of Uganda.

That result sits alongside a catalogue of Chinese technological firsts. A Shenzhen company called Ehang is piloting the world’s first commercial autonomous passenger flights using flying taxis. The T-Flight hyperloop train travels at up to 387 miles per hour using magnetic levitation, wheels never touching the track. Chinese automaker Seres holds a patent for an in-vehicle toilet that slides under the passenger seat. Chinese cars are already navigating potholes by jumping over them.

What distinguishes this moment from prior periods of Chinese manufacturing dominance is the combination of R&D and industrial application happening in the same geography. Hong Kong University of Science and Technology ranks first in China by patent influence, meaning patents that actually get used by industry, not just filed. The Greater Bay Area, spanning Guangdong, Hong Kong, and Macao, connects cutting-edge research directly to industrial-scale production. China files 1.8 million patent applications annually, according to World Intellectual Property Organization figures, compared with just over 500,000 from the U.S.

Alice’s Take: Germany and Japan once had the same dual track of research innovation and industrial application. China has inherited it. What makes China unique right now is not just the volume of innovation but also the speed of application: strong R&D feeding directly into manufacturing at scale in the southern provinces.

That said, when it comes to cutting-edge technologies like AI, quantum, and biotech, the U.S. is still leading. Google DeepMind’s AlphaFold is the kind of world-class breakthrough that is still coming out of Silicon Valley, not Shenzhen. China is closing the gap on benchmark metrics, but for now the frontier is still American. The patent numbers point to a direction of travel, not a destination already reached.

James’ Take: I think China may be entering something like the golden age the U.S. had in the run-up to World War I, when American labs were producing the airplane, the light bulb, the telephone, the record player, and air conditioning in rapid succession. Some of what I saw on the ground is genuinely extraordinary. The humanoid robots, the flying taxis, the hyperloop. These are not incremental improvements.

The drone economy alone is off the charts. China is producing military and consumer drones at a scale and pace that has no parallel anywhere else. The question is no longer whether China can innovate. It is whether the West is paying close enough attention to what is already here.

China Decode Predictions:

Alice’s Prediction: The UAE is facing a liquidity crunch from the oil shock and is in discussions with Washington about currency swap lines. The People’s Bank of China agreed to a $5 billion currency swap with the Central Bank of the UAE in November 2023. When that agreement comes up for renewal in the next two years, I expect the figure to increase, and CNY usage in Middle Eastern FX reserves and trade settlement to grow with it. This is not dedollarization. It is a steady expansion of CNY presence in a region where the dollar has long been unchallenged.

James’ Prediction: The gap between the combined market cap of China’s top five tech companies and America’s top five will close over the next year. The U.S. top five, Nvidia, Alphabet, Apple, Microsoft, and Amazon, were worth $17.8 trillion last Friday. China’s top five, Tencent, Alibaba, CATL, Xiaomi, and PDD Holdings, were worth $1.48 trillion, roughly one-twelfth of the U.S. figure. If U.S. markets cool on prolonged inflation and rate uncertainty, and the AI premium in the Magnificent Seven starts to compress, that ratio shifts. I would expect something closer to one-tenth by this time next year.
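The prediction can be checked with simple arithmetic. This minimal sketch uses only the two market-cap totals quoted above and asks how far either side would have to move, with the other held fixed, for the ratio to go from one-twelfth to one-tenth. Holding one side constant is a simplifying assumption. In practice both would move.

```python
# Combined market caps quoted in the prediction, in trillions of USD.
US_TOP5_T = 17.8
CHINA_TOP5_T = 1.48
TARGET_MULTIPLE = 10.0  # "one-tenth" means U.S. cap is 10x China's

# Current multiple of U.S. over China.
current_multiple = US_TOP5_T / CHINA_TOP5_T

# Scenario A: China's total is unchanged, U.S. total falls to 10x China's.
us_change_needed = (CHINA_TOP5_T * TARGET_MULTIPLE) / US_TOP5_T - 1

# Scenario B: U.S. total is unchanged, China's total rises to one-tenth of it.
china_change_needed = (US_TOP5_T / TARGET_MULTIPLE) / CHINA_TOP5_T - 1

print(f"Current multiple: {current_multiple:.1f}x")
print(f"U.S. change needed (China flat): {us_change_needed:.1%}")
print(f"China change needed (U.S. flat): {china_change_needed:.1%}")
```

On these numbers the current multiple is about 12x. Reaching one-tenth would take roughly a 17% fall in the U.S. total with China flat, or roughly a 20% rise in the Chinese total with the U.S. flat.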

Unfortunately, I think the article’s reading of China’s new supply-chain regulation could be somewhat misleading for multinational business leaders considering investment in China.

The first problem is that the piece frames the regulation as if it were simply an “export control law.” That is not quite accurate. Its formal title is the Regulations of the State Council on Industrial Chain and Supply Chain Security, and its scope is much broader than export control in the narrow sense. It is better understood as an umbrella framework that bundles together industrial security, information security, risk monitoring, emergency response, and countermeasure capacity. In other words, this is not just about adding one more layer of restriction at the export end. It is about formally elevating “industrial chain and supply chain security” into a governance system that can mobilize multiple agencies, monitor risks, issue warnings, conduct investigations, and impose countermeasures.

The second problem is that the article presents the regulation too much as if it were designed to indiscriminately intimidate all foreign firms. But the opening part of the regulation repeatedly stresses “coordinating development and security,” “advancing high-standard opening up,” and “promoting the stable and smooth operation of global industrial and supply chains.” It also explicitly says that China will “encourage and support enterprises” to diversify supply channels, carry out industrial and supply-chain cooperation, and participate in market competition on an equal footing. That suggests this is not a simple closure document. It reflects a dual objective: to remain open and keep supply chains functioning, while also institutionalizing stronger tools around security in key sectors, data security, and external countermeasures. Put differently, the message is: business is still welcome, but under conditions of security tension and geopolitical friction, the state intends to retain stronger intervention rights.

The third problem, and the most important one, is that the article interprets this mainly as China moving from reciprocal retaliation to proactive offensive action. But if you look at the text itself, the core logic of the regulation does not emerge independently of China-US friction. It is explicitly built on the existing legal architecture around national security and countermeasures. Article 14 directly addresses cases in which foreign states, regions, or international organizations impose discriminatory prohibitions, restrictions, or similar measures against China in the industrial and supply-chain sphere. Article 15 addresses cases in which foreign organizations or individuals interrupt normal transactions or adopt discriminatory measures that cause, or threaten to cause, substantial harm to China’s industrial and supply-chain security. In other words, this framework remains deeply embedded in the context of resisting external containment, extraterritorial coercion, and the weaponization of supply chains. It is not some entirely new offensive industrial weapon appearing out of nowhere. What is really happening is that China is taking the more fragmented countermeasure thinking of the past few years and turning it into a more administrative, procedural, and normalized system.

The fourth problem is that the article makes it sound as though a foreign company conducting its own supply-chain audit could inherently be illegal. But Article 13 does not ban all information-gathering related to industrial and supply chains. What it prohibits is conducting such investigations or information-collection activities in violation of Chinese laws, administrative regulations, departmental rules, or relevant state provisions. That distinction matters. It means the risk is not “auditing itself.” The real issue is whether activities such as auditing, due diligence, mapping, data transmission, supplier screening, or the organization of information in sensitive sectors cross the boundaries set by China’s existing rules on national security, data, surveying and mapping, counter-espionage, or personal information. So the real change is not that “all due diligence is now illegal.” The real change is that companies can no longer assume their globally standardized compliance, audit, and data-transfer procedures will automatically be treated as neutral in China.

Taken together, this regulation does not in itself mean China is about to arbitrarily launch a large-scale crackdown on foreign firms. The bigger shift is that it signals to all multinationals that operating in China can no longer rest on a clean separation between “commercial behavior” and “geopolitical behavior.” In the past, many firms believed they were simply doing compliance work, audits, or supply-chain adjustments. Now those same actions may increasingly be reinterpreted through the lens of national security and industrial security.

The article is right to identify why Silicon Valley is becoming increasingly drawn to Chinese AI: China is turning low-cost token generation into a structural advantage. Some Chinese models charge only around $2 to $3 per million output tokens, versus roughly $15 for Anthropic’s Claude Sonnet, a gap of nearly 6x. That cost differential is already pushing some U.S. companies, including Airbnb, to adopt Chinese large language models.

That said, the post’s account of this advantage is still incomplete. It argues that one reason is lower electricity prices in China, when in fact U.S. industrial electricity prices are generally lower than China’s. Its second point is more convincing: China’s embrace of the mixture-of-experts (MoE) path did emerge partly under conditions of compute constraint, and those constraints do force companies to focus more intensely on inference efficiency, model compression, and unit-cost optimization. But MoE is not a uniquely Chinese method, nor is it a technological path that naturally implies durable Chinese leadership. It is better understood as a tool that, at least for this stage, amplifies China’s engineering strengths.

I would add two points.

First, this should not be read too optimistically for Chinese AI. What is truly worth paying attention to is not simply that Chinese models are cheaper. It is that China may be developing a broader system-level capability to keep driving inference costs down while diffusing AI rapidly into real industrial and commercial use cases. But that is still very far from proving that the global center of AI power has already shifted. In reality, AI competition needs to be broken down into at least four layers. The first is frontier model capability: who is closest to the technological frontier. The second is inference cost: who can produce tokens more cheaply. The third is systems integration: cloud infrastructure, networking, memory, packaging, power, and data-center orchestration. The fourth is the application ecosystem: whether these capabilities can actually be absorbed by enterprises and consumers at scale. Frontier models, the compute foundation, cloud platforms, enterprise gateways, and global capital markets remain, to a very large extent, in American hands today. I discussed this in an earlier post as well (https://leonliao.substack.com/p/low-cost-open-models-are-squeezing?r=731anr&utm_medium=ios).

Second, cheap tokens do not automatically mean value capture will accrue to China. In the AI value chain, the party that produces tokens, the party that consumes them, the party that defines application-layer standards, and the party that controls customer relationships are not necessarily the same. Even if Chinese models provide cheaper inference in certain scenarios, the entities that ultimately capture the profit pool may still be American platform companies, cloud providers, enterprise software firms, or application-layer players that control distribution. This is no different from manufacturing: the lowest-cost producer does not necessarily capture the highest profits. What ultimately determines profit distribution is system control, branding, access points, standards, and customer stickiness. I have discussed that in another post as well (https://leonliao.substack.com/p/chinas-token-exports-are-explodingwhat?r=731anr&utm_medium=ios).